IT professionals used to say things like “let’s build a Beowulf cluster” when they faced a hard computing problem, and now they say “let’s use Hadoop” when they face vast amounts of data. Digitization and technological advancement are making the world of big data more complicated, and the huge volumes of data generated every day are clogging up servers. Hadoop came to the rescue by processing large amounts of data in parallel across many machines. Before Hadoop, storing data at this scale was prohibitively costly, but this is no longer the case. Here are the main reasons Hadoop can supercharge your business:

A Brief Overview Of Hadoop

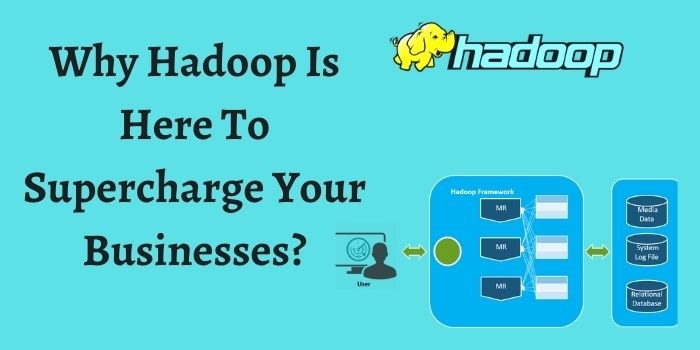

Hadoop is a free and open-source framework that was created in 2005 to handle large amounts of data. It stores and processes large datasets in a distributed way across large clusters of commodity hardware. Because Apache Hadoop runs on commodity hardware, storing and processing big datasets is both cost-effective and efficient.

The efficiency comes from running batch processes in parallel. In this configuration, data does not have to be sent over the network to a central processing node; each node works on the data it already stores. In other words, a large problem is broken down into smaller problems, each one is solved individually, and the results are combined into a complete answer at the end. The cost-effectiveness comes from the use of commodity hardware: the big datasets are partitioned and stored on local drives of reasonable capacity.
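To make the "break it down, solve each piece, combine the results" idea concrete, here is a minimal sketch in plain Python. It is not Hadoop code: the function names (`map_partition`, `reduce_counts`, `word_count`) and the sample partitions are made up for illustration, and a thread pool stands in for the nodes of a cluster.

```python
from collections import Counter
from concurrent.futures import ThreadPoolExecutor


def map_partition(lines):
    """Solve one small problem: count the words in a single partition."""
    counts = Counter()
    for line in lines:
        counts.update(line.lower().split())
    return counts


def reduce_counts(partials):
    """Combine the per-partition counts into one final result."""
    total = Counter()
    for partial in partials:
        total.update(partial)
    return total


def word_count(partitions, workers=4):
    """Process every partition in parallel, then merge the results."""
    with ThreadPoolExecutor(max_workers=workers) as pool:
        partials = list(pool.map(map_partition, partitions))
    return reduce_counts(partials)


# Hypothetical data: each inner list plays the role of data held by one node.
partitions = [
    ["big data big clusters"],
    ["data on commodity hardware"],
    ["big clusters of commodity hardware"],
]

result = word_count(partitions)
print(result["big"], result["data"], result["commodity"])  # prints: 3 2 2
```

Each partition is counted on its own, and only the small per-partition summaries are merged at the end, which is the same reason a cluster avoids shipping the raw data to one central machine.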

As a Data Storage Component, HDFS Is a Good Choice

As a pioneering big data solution, Apache Hadoop became the go-to option for enterprises that got a head start on big data by adopting it early. They were able to store structured, unstructured, and semi-structured data in diverse forms (records, videos, texts, and so on), and they could cope with enormous files, since HDFS breaks them down into smaller pieces and distributes them over several nodes. Over time, several new technologies emerged, and the growth of big data pushed enterprises from conventional ETL to ELT procedures, which gave rise to the data lake idea. Did Hadoop keep up with the times? Without a doubt! According to survey findings, many businesses use Apache Hadoop, and the vast majority of respondents select it as the technology of choice for constructing a data lake.
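The way HDFS breaks a large file into pieces can be sketched in a few lines of Python. This is an illustration only, under stated assumptions: the block size is 128 MiB (the default `dfs.blocksize` in Hadoop 2 and later), the node names are hypothetical, and the round-robin placement is a simplification, since real HDFS placement is rack-aware and also replicates each block.

```python
BLOCK_SIZE = 128 * 1024 * 1024  # 128 MiB, the Hadoop 2+ default block size


def split_into_blocks(file_size, block_size=BLOCK_SIZE):
    """Return a list of (offset, length) pairs, one per block."""
    blocks = []
    offset = 0
    while offset < file_size:
        length = min(block_size, file_size - offset)
        blocks.append((offset, length))
        offset += length
    return blocks


def assign_round_robin(blocks, nodes):
    """Simplified placement (an assumption): hand blocks to nodes in turn."""
    placement = {node: [] for node in nodes}
    for index, _ in enumerate(blocks):
        placement[nodes[index % len(nodes)]].append(index)
    return placement


file_size = 1000 * 1024 * 1024  # a hypothetical 1000 MiB file
blocks = split_into_blocks(file_size)
placement = assign_round_robin(blocks, ["node-a", "node-b", "node-c"])

print(len(blocks))                      # prints: 8
print(blocks[-1][1] // (1024 * 1024))   # last block is smaller, prints: 104
print(placement["node-a"])              # prints: [0, 3, 6]
```

The last block is only as large as the data that remains, so a file does not have to be an exact multiple of the block size.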

Know The Benefits of Apache Hadoop Services For Businesses

Apache Hadoop uses parallel processing to divide work over numerous nodes, which increases processing speed. As an added benefit, it can analyze data where it is stored rather than having to send it over the network.

Aside from these well-known advantages, Apache Hadoop offers a number of others that aren’t always immediately apparent. Let’s keep reading!

It Has The Ability To Be Scaled

The growth in the generation and collection of data is often cited as a bottleneck in Big Data analysis. Many businesses struggle to keep their data on a platform that gives them a single, consistent view. Apache Hadoop clusters are a highly scalable storage technology: they can store and distribute datasets over hundreds of low-cost servers, and a cluster can be grown simply by adding more nodes. This enables organizations to run programs on thousands of nodes and deal with thousands of terabytes of data without the need for specialized hardware.
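A rough back-of-the-envelope calculation shows how capacity grows with the number of nodes. The sketch below is only an estimate under assumptions: every node has the same amount of disk, each block is stored three times (the HDFS default replication factor), and overhead such as temporary space is ignored. The cluster sizes are hypothetical.

```python
def usable_capacity_tb(nodes, disk_per_node_tb, replication=3):
    """Estimate usable storage: raw capacity divided by the replica count."""
    raw_tb = nodes * disk_per_node_tb
    return raw_tb / replication


# Hypothetical cluster: 100 nodes with 8 TB of disk each.
before = usable_capacity_tb(100, 8)
# Growing the cluster by 50 identical nodes.
after = usable_capacity_tb(150, 8)

print(round(before, 1))  # prints: 266.7
print(round(after, 1))   # prints: 400.0
```

Capacity grows in step with the node count, which is what scale-out means in practice.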

Economically Advantageous

Hadoop clusters have proven to be a highly cost-effective option for growing datasets. Because Hadoop is built as a scale-out architecture, it can affordably store all of a company’s data for later retrieval. This saves a significant amount of money while also significantly increasing storage capacity.

It is Very Dependable And Highly Available

Hadoop is a very fault-tolerant platform. By default, HDFS stores three copies of each block of a file on different nodes, ensuring that even if one of the nodes goes down, the other two copies are still available.
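The effect of keeping three copies can be sketched with a small simulation. This is a toy model, not HDFS itself: the node names are hypothetical, and replicas are placed by simple rotation, whereas real HDFS uses a rack-aware placement policy.

```python
def place_replicas(num_blocks, nodes, replication=3):
    """Assign each block to `replication` distinct nodes by simple rotation."""
    if replication > len(nodes):
        raise ValueError("need at least as many nodes as replicas")
    return {
        block: [nodes[(block + r) % len(nodes)] for r in range(replication)]
        for block in range(num_blocks)
    }


def readable_blocks(placement, failed_nodes):
    """Return the blocks that still have a copy on a healthy node."""
    return {
        block
        for block, holders in placement.items()
        if any(node not in failed_nodes for node in holders)
    }


nodes = ["n1", "n2", "n3", "n4", "n5"]  # hypothetical five-node cluster
placement = place_replicas(6, nodes)

print(len(readable_blocks(placement, {"n1"})))              # prints: 6
print(len(readable_blocks(placement, {"n1", "n2"})))        # prints: 6
print(len(readable_blocks(placement, {"n1", "n2", "n3"})))  # prints: 4
```

With three replicas, any one or two node failures leave every block readable in this model; data is lost only when all three holders of a block fail at once.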

Reliable

The cluster stores data reliably. This is owing to the fact that data is replicated across the cluster, which helps when a machine breaks down or is otherwise offline.

Fault Tolerant

It adapts to failures in the environment: by default, three replicas of each block are stored across the cluster.

Scalable

Hadoop scales horizontally. New nodes can simply be added on the fly without the need to shut down the system.

High Availability

The failure of a machine has no effect on the availability of data. This is owing to the fact that multiple copies of the data are stored.
